UAVs, or drones, can reach locations that are otherwise difficult to access with a camera or other sensors. This allows efficient inspection and mapping of a wide range of objects and areas. Perception research at the SDU UAS Center mainly uses off-the-shelf components and UAVs, such as the DJI Phantom, for data acquisition. This makes it easier to scale systems up to additional users, as the developed methods do not rely on hard-to-access research equipment.

Inspection tasks generate large amounts of data in the form of videos and still images, which are time-consuming to interpret manually. Our research focuses on automating this analysis and interpretation, so that valuable information can be extracted from the gathered data.

Color analysis

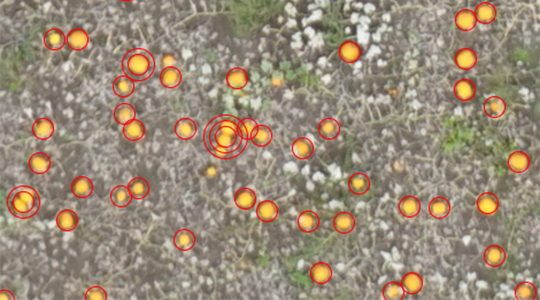

One large-scale inspection task is the research project “Drone-based pumpkin counting”, where we map several hundred thousand pumpkins in a field. The raw images are of limited value to the farmer, but interpreting them makes it possible to extract the total number of pumpkins and their density distribution across the field. Detailed information like this can help farmers optimize their business. To map the pumpkins, we apply a color analysis to the UAV images. By sampling colors from a few pumpkins, we generate a color model of the typical pumpkin colors. This color model is then used to segment the UAV recordings into orange pumpkin regions and black soil regions. Finally, the orange blobs are counted.
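
The three steps described above (color model, segmentation, blob counting) can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions, not the project's actual pipeline: the color model here is just the mean RGB value of the sampled pixels, the threshold `max_dist` is an arbitrary choice, and the function names `build_color_model`, `segment`, and `count_blobs` are hypothetical.

```python
from collections import deque


def build_color_model(samples):
    """Assumed color model: the mean RGB value of the sampled pumpkin pixels."""
    n = len(samples)
    return tuple(sum(px[c] for px in samples) / n for c in range(3))


def segment(image, model, max_dist=60.0):
    """Binary mask: True where a pixel is within max_dist (Euclidean, RGB) of the model."""
    return [
        [sum((px[c] - model[c]) ** 2 for c in range(3)) ** 0.5 <= max_dist for px in row]
        for row in image
    ]


def count_blobs(mask):
    """Count 4-connected regions of True pixels with a breadth-first flood fill."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            blobs += 1  # found an unvisited pumpkin-colored pixel: a new blob
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return blobs


# Toy 4x5 "field": O is an orange pumpkin pixel, S is a dark soil pixel (made-up values).
O = (230, 120, 20)
S = (40, 30, 25)
image = [
    [O, O, S, S, S],
    [O, S, S, O, S],
    [S, S, S, O, S],
    [S, O, S, S, S],
]

model = build_color_model([(235, 125, 15), (225, 115, 25)])  # colors sampled from two pumpkins
mask = segment(image, model)
pumpkin_count = count_blobs(mask)
print(pumpkin_count)
```

On real UAV imagery, touching pumpkins would merge into a single blob and lighting changes would shift the colors, so a practical system needs a richer color model and some handling of merged regions.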

Recognition of textures

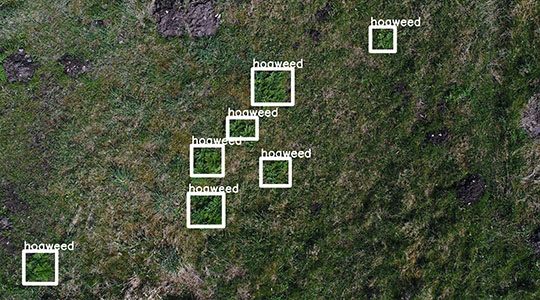

A color-based analysis is not sufficient to detect giant hogweed in UAV recordings, as this invasive plant species has colors similar to those of the plants it grows next to, e.g., grass. To detect giant hogweed we use Convolutional Neural Networks (CNNs), which can learn to recognize the characteristic texture of its leaves. This enables us to recognize giant hogweed at an early growth stage, when it is much easier to control effectively.
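
To illustrate why texture can separate regions that color cannot, the sketch below applies the basic building blocks of a CNN (convolution, ReLU, and average pooling) to two toy grayscale patches with identical mean intensity. It is not the network used in this research: the filter `EDGE_KERNEL` is hand-picked for the example, whereas a real CNN learns many such filters from labeled images, and the names `conv2d_valid` and `texture_response` are hypothetical.

```python
def conv2d_valid(image, kernel):
    """'Valid' 2D cross-correlation, the operation a CNN convolution layer computes."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[y + i][x + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]


def texture_response(patch, kernel):
    """Convolution -> ReLU -> global average pooling: one texture score per patch."""
    feature_map = conv2d_valid(patch, kernel)
    activations = [max(0.0, v) for row in feature_map for v in row]
    return sum(activations) / len(activations)


# Hand-picked filter that responds to dark-to-bright vertical edges (an assumption;
# a trained CNN would learn its filters instead).
EDGE_KERNEL = [[-1, 1], [-1, 1]]

# Two 6x6 patches with the same average intensity (100) but different texture.
flat = [[100] * 6 for _ in range(6)]
striped = [[50, 150, 50, 150, 50, 150] for _ in range(6)]

flat_score = texture_response(flat, EDGE_KERNEL)
striped_score = texture_response(striped, EDGE_KERNEL)
print(flat_score, striped_score)
```

An average-intensity comparison cannot tell the two patches apart, while the filter response is zero for the flat patch and clearly positive for the striped one. A CNN stacks many learned filters of this kind, which is what lets it pick up leaf texture.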

Related research projects

Square meter Farming (SqM-Farm)