In this project we are partnering with the pathology department at Odense University Hospital to increase digitization and automation in hospitals. We are developing and training deep neural networks to perform quality assessment of digitized tissue samples, a process that is currently done manually by expert pathologists. If the project is successful, it could free up resources for other areas of pathology departments.

One of the main challenges in this project is the resolution of the digitized tissue samples: a single image may contain tens of billions of pixels (on the order of 10¹⁰), and most deep neural networks cannot process that much data at once, even on modern hardware. The most common solution is to downscale the images to a lower resolution. This, however, causes a significant loss of information, which in pathology can make the desired analysis impossible, since it may be important to analyze the tissue at the cellular level.
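To give a feel for the scale involved, the following back-of-the-envelope sketch estimates the raw size of such an image and the effect of downscaling. The 3-channel, 8-bit pixel layout and the downscaling factor of 32 are assumptions chosen for illustration, not figures from the project.

```python
def raw_image_bytes(num_pixels: int, channels: int = 3, bytes_per_channel: int = 1) -> int:
    """Uncompressed size of an image in bytes (assumed 8-bit RGB by default)."""
    return num_pixels * channels * bytes_per_channel


def downscaled_pixels(num_pixels: int, factor: int) -> int:
    """Pixel count after shrinking both width and height by `factor`."""
    return num_pixels // (factor * factor)


pixels = 10**10                               # order of magnitude stated above
size_gb = raw_image_bytes(pixels) / 1e9       # decimal gigabytes, uncompressed
small = downscaled_pixels(pixels, factor=32)  # hypothetical downscaling factor

print(f"raw size: {size_gb:.0f} GB, downscaled: {small:,} pixels")
```

Under these assumptions the uncompressed image occupies about 30 GB. After downscaling by 32 along each axis it fits comfortably in memory, but every remaining pixel then summarizes a 32 × 32 block of the original, which is the loss of cellular-level detail described above.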

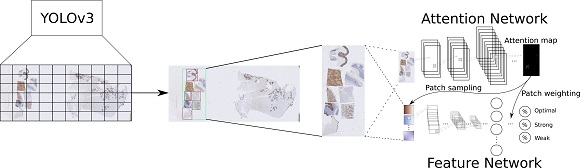

Our proposed solution is to use deep learning to first isolate the region of the tissue sample that should be assessed, and then to apply an attention-based classification network to that region. To isolate the tissue, we use YOLOv3, a well-known object-detection network. For the quality assessment, we use an architecture called an attention-sampling network. It uses one sub-network (the attention network) to predict which locations in the tissue area are important, and then analyses patches from those locations with a second sub-network (the feature network) to predict the quality of the tissue sample.
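The data flow of an attention-sampling step can be sketched roughly as follows. This is only an illustration under stated assumptions, not the project's implementation: `toy_attention` and `toy_features` are hypothetical stand-ins for the trained attention and feature networks, the image is a synthetic grayscale array, and the scale, patch size, and number of patches are arbitrary.

```python
import numpy as np


def toy_attention(low_res: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for the attention network.

    Returns a probability distribution over low-resolution locations;
    here darker pixels simply receive more weight (softmax of darkness).
    """
    scores = -low_res.astype(np.float64) / 255.0
    scores -= scores.max()                      # numerical stability
    weights = np.exp(scores)
    return weights / weights.sum()


def sample_locations(attention: np.ndarray, n_patches: int, rng) -> np.ndarray:
    """Draw (row, col) locations in proportion to the attention weights."""
    flat = rng.choice(attention.size, size=n_patches, p=attention.ravel())
    rows, cols = np.unravel_index(flat, attention.shape)
    return np.stack([rows, cols], axis=1)


def extract_patches(high_res, locations, scale: int, patch_size: int) -> np.ndarray:
    """Cut full-resolution patches centred on the sampled low-res locations."""
    height, width = high_res.shape[:2]
    half = patch_size // 2
    patches = []
    for row, col in locations:
        centre_r = row * scale + scale // 2
        centre_c = col * scale + scale // 2
        # Clamp so the patch stays inside the image.
        top = int(np.clip(centre_r - half, 0, height - patch_size))
        left = int(np.clip(centre_c - half, 0, width - patch_size))
        patches.append(high_res[top:top + patch_size, left:left + patch_size])
    return np.stack(patches)


def toy_features(patches: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for the feature network: mean and std per patch."""
    flat = patches.reshape(len(patches), -1).astype(np.float64)
    return np.stack([flat.mean(axis=1), flat.std(axis=1)], axis=1)


def attention_sampling_features(high_res, scale, n_patches, patch_size, rng):
    """Average the features of patches sampled where attention is high."""
    low_res = high_res[::scale, ::scale]        # crude downscaling by striding
    attention = toy_attention(low_res)
    locations = sample_locations(attention, n_patches, rng)
    patches = extract_patches(high_res, locations, scale, patch_size)
    return toy_features(patches).mean(axis=0)


rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(256, 256)).astype(np.uint8)  # synthetic data
features = attention_sampling_features(image, scale=8, n_patches=16,
                                       patch_size=32, rng=rng)
print(features.shape)
```

The point of the design is that only the low-resolution view and a handful of full-resolution patches are ever processed, so memory use depends on the number and size of the patches rather than on the size of the whole image. In a real system the averaged features would be passed to a classifier that outputs the quality prediction.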

All of the data used to train the algorithms is produced for this project at the pathology department of Odense University Hospital.

The project is funded by The Region of Southern Denmark.

Contact:

Professor Thiusius Rajeeth Savarimuthu

Project Partners:

SDU Robotics, University of Southern Denmark, Denmark; Odense University Hospital, Denmark